YAK: 3D Reconstruction in ROS2

So why YAK?

It’s difficult for robots to perceive objects in the real world, especially when those objects are shiny, previously unseen, or (gasp!) both! We are excited to introduce Yak, an open-source GPU-accelerated ROS2 package which addresses some of these challenges using Truncated Signed Distance Fields. The Southwest Research Institute booth demo from the Automate 2019 conference serves as a case study for a ROS2 system that integrates Yak into its perception and motion planning pipeline.

A bit of technical background

A Truncated Signed Distance Field (TSDF) is a 3D voxel array representing objects within a volume of space. Each voxel stores the signed distance to the nearest surface, where the sign indicates which side of the surface the voxel lies on and the magnitude is truncated to a narrow band around that surface. The TSDF algorithm can be efficiently parallelized on a general-purpose graphics processor, which allows data from RGB-D cameras to be integrated into the volume in real time.
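To make the per-voxel labeling concrete, here is a minimal CPU sketch of the truncated signed distance along a single camera ray. It is not Yak's implementation (which runs on the GPU); the function name, the sign convention (positive in front of the surface), and all numeric values are assumptions chosen for illustration.

```python
def truncated_signed_distance(voxel_depth, measured_depth, truncation):
    """Signed distance from a voxel to the observed surface along one ray.

    Positive values lie in front of the surface (free space), negative
    values lie behind it. The result is clamped to +/- truncation.
    Assumed convention for this sketch, not taken from Yak.
    """
    sdf = measured_depth - voxel_depth
    return max(-truncation, min(truncation, sdf))


# Hypothetical voxel centers along one ray, in meters from the camera.
voxel_depths = [0.46, 0.48, 0.50, 0.52, 0.54]
measured_depth = 0.50   # depth reported by the camera for this pixel
truncation = 0.03       # width of the band kept around the surface

tsdf_along_ray = [
    round(truncated_signed_distance(d, measured_depth, truncation), 3)
    for d in voxel_depths
]
print(tsdf_along_ray)
```

Voxels far from the surface saturate at the truncation distance, so only the narrow band around the surface carries detailed information.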

Numerous observations of an object from different perspectives average out noise and errors due to specular highlights and interreflections, producing a smooth continuous surface. This is a key advantage over equivalent point-cloud-centric strategies, which require additional processing to distinguish between engineered features and erroneous artifacts in the scan data. The volume can be converted to a triangular mesh using the Marching Cubes algorithm and then handed off to application-specific processes.
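The averaging effect can be sketched with the running weighted average commonly used in KinectFusion-style systems, followed by the linear interpolation that Marching Cubes applies along a cube edge where the field changes sign. This is an illustrative sketch, not Yak's code: the function names, the weight cap, and the sample values are assumptions.

```python
def fuse(tsdf, weight, observation, obs_weight=1.0, max_weight=64.0):
    """Fold one new distance observation into a voxel's running average.

    max_weight is an assumed cap that keeps old data from dominating forever.
    """
    new_weight = weight + obs_weight
    fused = (tsdf * weight + observation * obs_weight) / new_weight
    return fused, min(new_weight, max_weight)


def zero_crossing(x0, d0, x1, d1):
    """Linearly interpolate where the field crosses zero between two voxels.

    Requires d0 and d1 to have opposite signs.
    """
    return x0 + (x1 - x0) * d0 / (d0 - d1)


# Hypothetical observations of one voxel, in meters. The 0.030 reading
# stands in for an outlier caused by a specular highlight.
observations = [0.012, 0.008, 0.011, 0.030, 0.009]

tsdf, weight = 0.0, 0.0
for obs in observations:
    tsdf, weight = fuse(tsdf, weight, obs)

# Surface position between this voxel (at 0.50 m) and its neighbor
# (at 0.52 m), whose fused value is assumed to be -0.006.
surface = zero_crossing(0.50, tsdf, 0.52, -0.006)
print(tsdf, weight, surface)
```

With more views the single outlier is diluted further, which is why many perspectives give a smoother surface than any one scan.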

Machined Al Test Part

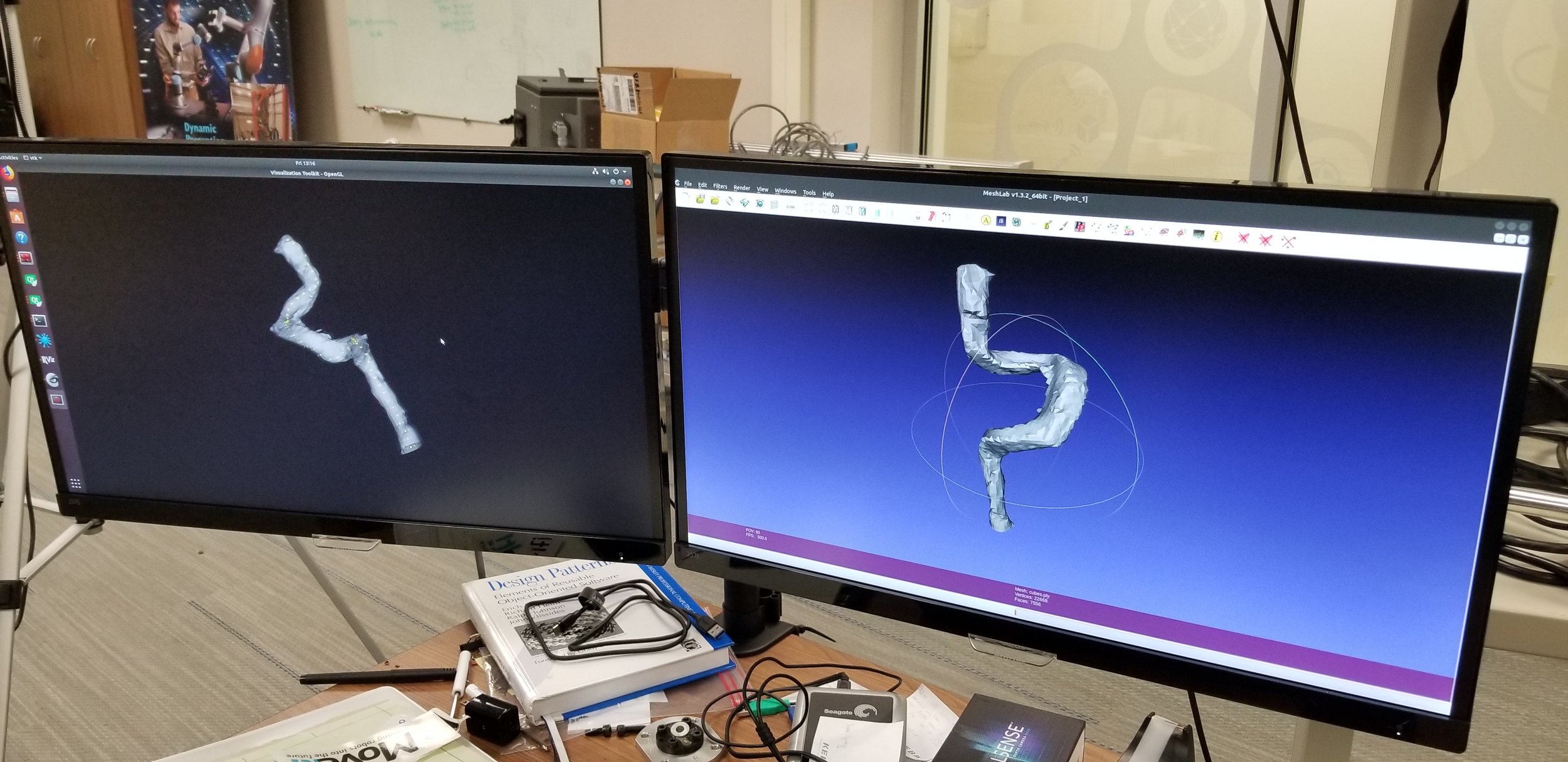

YAK Reconstruction of Machined Al Part

Reconstructing in the real world

My group at Southwest Research Institute has been working with TSDFs since Spring 2017. This year we started work on our first commercial projects leveraging TSDFs in industrial applications. We developed our booth demo at the 2019 Automate conference to provide an open-source example of this type of system.

The set of libraries we use in these applications is called Yak (which stands for Yet Another KinectFusion, in reference to the substantial prior history of TSDF algorithms). Yak consists of two repositories: a ROS-agnostic set of core libraries implementing the TSDF algorithm, and a repository containing ROS packages that wrap the core libraries in a node with subscribers for image data and services to handle meshing and resetting the volume. Both ROS and ROS2 versions of this node are provided. A unique feature of Yak compared to previous TSDF libraries is that the pose of the sensor origin can be provided through the ROS tf system from an outside source, such as robot forward kinematics or external tracking. This is advantageous for robotic applications because it leverages information that is generally already known to the system.
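As a rough illustration of why an externally supplied sensor pose is useful, the sketch below chains a flange pose from forward kinematics with a camera mounting offset from calibration, then maps a camera-frame point into the robot base frame. It uses plain 4x4 homogeneous matrices rather than the tf or Yak APIs, and every frame name and number in it is hypothetical.

```python
import math


def matmul4(a, b):
    """Multiply two 4x4 homogeneous transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform_point(t, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))


def rot_z_translate(yaw, tx, ty, tz):
    """Transform made of a rotation about Z followed by a translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


# Pose of the robot flange in the base frame (assumed forward kinematics result).
base_to_flange = rot_z_translate(math.pi / 2, 0.4, 0.0, 0.6)
# Pose of the camera in the flange frame (assumed extrinsic calibration result).
flange_to_camera = rot_z_translate(0.0, 0.0, 0.05, 0.1)

# Pose of the camera in the base frame; maps camera-frame points into base.
base_to_camera = matmul4(base_to_flange, flange_to_camera)

# A depth sample 0.5 m in front of the camera, expressed in the base frame.
point_in_base = transform_point(base_to_camera, (0.0, 0.0, 0.5))
print(point_in_base)
```

Because the pose comes from the kinematic chain, no frame-to-frame image registration is needed to place each depth sample in the volume.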

The test system, which is in the pipeline for open-source release as a practical demonstration, uses a RealSense D435 RGB-D camera mounted on a KUKA iiwa7 to collect 3D images of a shiny metal tube bent into a previously unseen configuration. The scans are integrated into a TSDF volume as the robot moves the camera around the tube.

The resulting mesh is processed by a ROS node using surface analysis functions from VTK to extract waypoints and tangential vectors along the length of the tube. These waypoints constitute the seed trajectory for a motion plan, generated by our Descartes and Tesseract libraries, that sweeps a ring along the tube while avoiding collisions. Camera and turntable extrinsic calibration was performed using a nonlinear optimization function from the robot_cal_tools package.
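The waypoint-and-tangent idea can be sketched without VTK. Assuming the tube's centerline has already been extracted from the mesh as an ordered list of points, the snippet below pairs each point with a unit tangent estimated by finite differences. This is a stand-in for illustration only: it is not the demo's VTK-based code, and the centerline coordinates are made up.

```python
import math


def unit(v):
    """Scale a 3D vector to unit length (the vector must be nonzero)."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def tangents_along(points):
    """Estimate a unit tangent at each point of an ordered polyline.

    Interior points use a central difference between their neighbors;
    the two endpoints fall back to a one-sided difference.
    Needs at least two distinct points.
    """
    last = len(points) - 1
    tangents = []
    for i in range(len(points)):
        a = points[max(i - 1, 0)]
        b = points[min(i + 1, last)]
        tangents.append(unit(tuple(bc - ac for ac, bc in zip(a, b))))
    return tangents


# Hypothetical centerline of a bent tube, in meters.
centerline = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.2, 0.1, 0.0), (0.2, 0.2, 0.0)]

# Each waypoint pairs a position with the direction the ring should face.
waypoints = list(zip(centerline, tangents_along(centerline)))
for position, tangent in waypoints:
    print(position, tangent)
```

A planner could then orient the ring so that its axis follows each tangent, using these poses as the seed for the trajectory.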

A picture of the reconstructed mesh used to generate a robot trajectory is below. Additional information about the development of this demo (plus some neat video!) is available here. As ROS2 training is integrated into the ROS-I Consortium Americas curriculum, we look forward to sharing more lessons learned about applying Yak towards similar applications.

What next?

Yak is open-source as of July 2019. While development is ongoing and we anticipate that the library APIs will continue to evolve, we encourage interested parties to check it out at github.com/ros-industrial/yak and github.com/ros-industrial/yak_ros.